By manoj.kumar · February 7, 2026

Health systems sit on more data than ever before. Clinical systems, claims, labs, devices, and digital front doors all generate streams of information. If your teams cannot move, transform, and trust this data, care stalls and operational decisions slow down. Scalable healthcare data pipelines give you the structure to turn raw inputs into timely, usable insights without constant rework or firefighting.

You need an approach that respects regulation, spans legacy and cloud, and supports both near real time and batch needs. This guide walks through what scalable healthcare data pipelines are, why they matter, how to design them, and how to avoid common failure points so your health system can act on data with confidence.

What Are Data Pipelines in Health Systems

Health system data pipelines are the repeatable flows that move data from source systems to target systems, while applying validation, normalization, and transformation. They give you a managed path instead of ad hoc extracts and one off integrations.

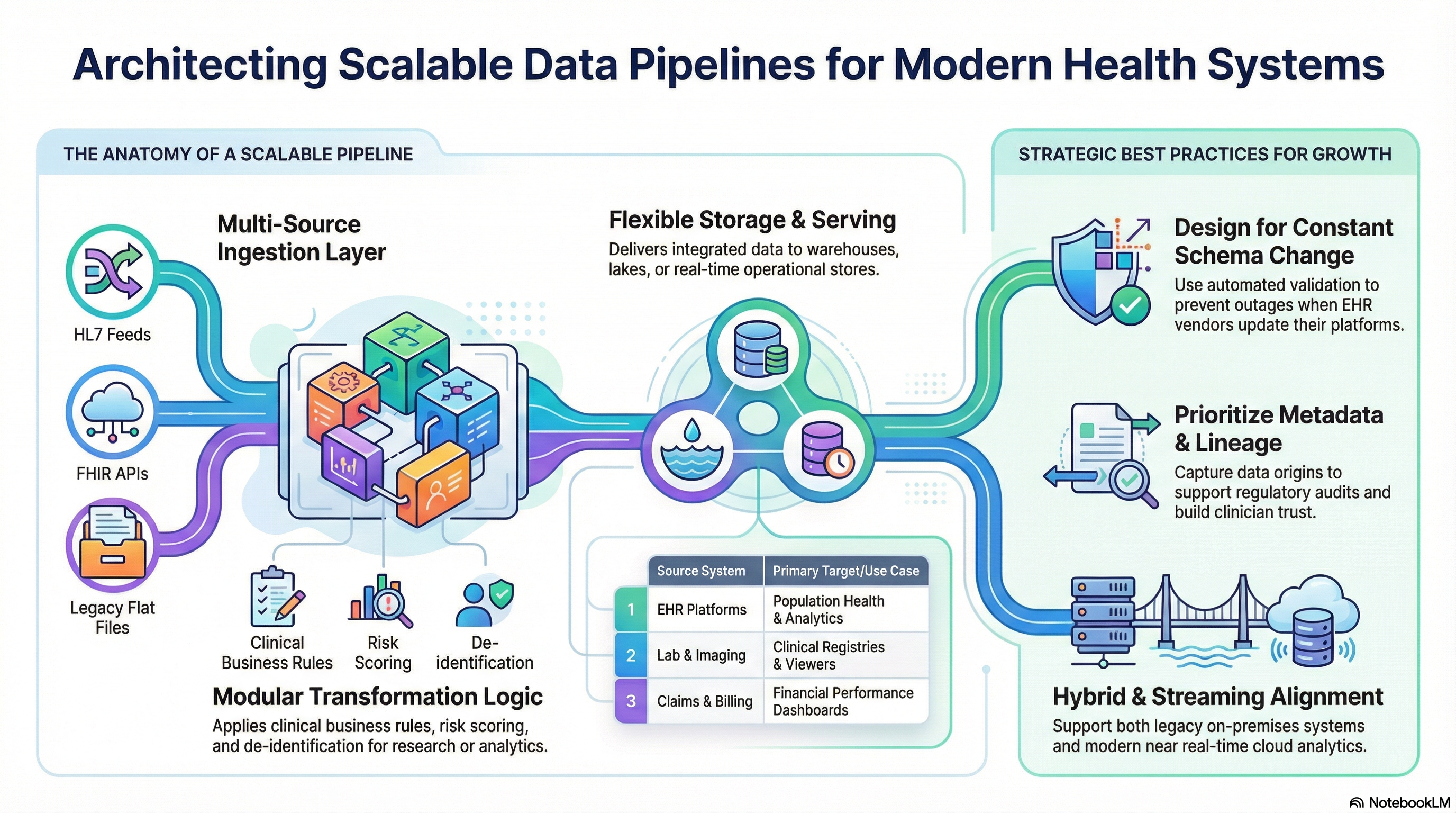

In a health system, data pipelines often connect:

• EHR platforms to analytics environments or population health tools

• Lab and imaging systems to clinical viewers or registries

• Claims and billing systems to financial analytics platforms

• Patient engagement tools to customer relationship systems

• IoT and remote monitoring devices to care management systems

Scalable healthcare data pipelines take these flows and design them for growth, change, and reliability. They support higher volumes, more sources, and new use cases without constant refactoring. They also respect healthcare specific requirements around privacy, data standards, and auditability.

A typical healthcare ETL pipeline in a health system will:

• Extract data from EHRs, ancillary systems, external partners, and files

• Transform and standardize data, including code sets and date formats

• Load data into warehouses, data lakes, analytics platforms, or operational stores

When you view data movement as a set of intentional health system data pipelines, you gain control, traceability, and the ability to scale.

Why Scalability Matters for Healthcare Data Pipelines

Healthcare data volume and complexity keep growing. New sources appear, from telehealth platforms to patient apps and devices. Regulatory reporting expectations become stricter. Analytics teams need fresher data and broader coverage. Without scalable healthcare data pipelines, each new requirement introduces risk and delay.

Healthcare data pipeline scalability affects several critical areas.

Clinical decision support and care quality

Clinicians need timely, accurate data in workflows. If your pipelines cannot process new feeds or surges in volume, downstream alerts lag or fail. Scalable data pipelines healthcare wide support more sources of clinical context for safer, more informed decisions.

Operational efficiency and financial performance

Revenue cycle, staffing, and supply chain teams rely on analytics and dashboards built on your pipelines. If those pipelines throttle under load or break during peak periods, leaders lose visibility into performance. Scalable healthcare data pipelines protect analytics continuity even as new systems or sites come online.

Regulatory and partner reporting

Health systems must report to payers, state agencies, and federal programs. External partners also expect consistent feeds. Healthcare data pipeline scalability ensures you can expand to new reporting requirements and new partners without rearchitecting every time.

Innovation and data driven initiatives

Population health, precision medicine, and digital front door initiatives all strain data infrastructure. When your scalable healthcare data pipelines are reliable, teams can test new ideas faster and operationalize successful ones without large integration rebuilds.

Core Components of Scalable Healthcare Data Pipelines

A scalable pipeline is more than a simple ETL job. It is a set of modular components that each play a clear role. Understanding these building blocks helps you evaluate current capabilities and design future state healthcare data engineering in a deliberate way.

Source ingestion layer

The ingestion layer handles intake from diverse systems and formats. In health systems this often includes:

• HL7 v2 feeds from EHRs, labs, and ADT systems

• FHIR APIs from modern clinical and digital health systems

• Flat files from external partners

• Databases and message queues

Scalable healthcare data pipelines use connectors and adapters that handle protocol specifics but expose consistent configuration and monitoring. This isolates source complexity from downstream logic.

Staging and normalization

Incoming data passes through a staging area where it is validated, profiled, and normalized. For health system data pipelines, this often includes:

• Schema validation and required field checks

• Standardization of identifiers across systems

• Mapping of clinical concepts to standard vocabularies where appropriate

• Handling of time zones and date formats

This step protects downstream systems from bad data and creates a consistent foundation for healthcare data pipeline architecture.

Transformation and business logic

Transformation steps apply business rules, aggregations, and calculations. For healthcare ETL pipelines, examples include:

• Deriving episodes or encounters from event streams

• Attributing patients to providers or care teams

• Building risk scores and quality measure flags

• Deidentifying data for research or analytics environments

In scalable data pipelines healthcare programs need transformation logic that is modular, versioned, and testable. This supports safe change as contracts, regulations, and practices evolve.

Storage and serving layer

The storage layer holds integrated data and serves it to consumers. Common patterns include:

• Enterprise data warehouses for structured analytics

• Data lakes for semi structured and unstructured data

• Operational data stores for near real time operational use

• Specialized marts for population health, finance, or quality

Healthcare data pipeline architecture should separate compute from storage where possible and support different performance tiers for different workloads.

Orchestration, monitoring, and observability

Orchestration tools coordinate pipeline steps so they run in the right order with the right dependencies. Monitoring and observability show health, performance, and failures. For health systems, strong observability is critical because data issues can affect patient care and compliance risk.

Scalable healthcare data pipelines include:

• Centralized logging

• Alerting for failures and SLA breaches

• Metrics on throughput, latency, and error rates

• Traceability for regulatory and audit needs

Security, privacy, and governance

Data security and privacy controls must sit across the entire pipeline. Healthcare data engineering requires:

• Access controls aligned with roles and responsibilities

• Encryption in motion and at rest

• Data masking where needed

• Data lineage tracking

• Policies for retention and deletion

These controls must scale as new sources and consumers join the environment.

Architecture Considerations for Health Systems

Each health system has a unique mix of legacy systems, cloud initiatives, and partner relationships. Still, some architecture considerations apply across the board when you design scalable healthcare data pipelines.

Hybrid and multi environment support

Many health systems operate across on premises data centers and one or more clouds. Your healthcare data pipeline architecture should support:

• Connectivity to on premises EHRs and ancillary systems

• Secure transfer to cloud environments where analytics and modern services run

• Clear patterns for data residency and sovereignty constraints

A hybrid aware design keeps options open as your strategy evolves.

Batch and streaming alignment

Some use cases tolerate daily or hourly refresh. Others, like sepsis alerts or ADT based notifications, need streaming or near real time patterns. Scalable data pipelines healthcare programs often blend:

• Streaming pipelines for high value, time sensitive events

• Batch pipelines for large, non urgent workloads

• Shared transformation logic across both modes where possible

Designing for both from the start reduces duplication and surprises when needs grow.

Standardization around interfaces and contracts

To keep health system data pipelines maintainable, define standard contracts at key points. For example:

• Canonical patient identifiers and demographics structures

• Common encounter and visit schemas

• Standard event models for ADT, orders, results, and documents

These contracts reduce point to point mappings and make onboarding new systems faster.

Domain driven design

Healthcare data pipeline architecture benefits from domain boundaries, such as:

• Clinical data domain

• Financial and claims domain

• Operations domain

• Patient engagement domain

Align your models, ownership, and pipelines around these domains. This supports independent evolution, clearer accountability, and reduced coupling.

Vendor and tool neutrality

Tooling will change. Migrations will occur. Scalable healthcare data pipelines should use patterns and abstractions that keep you from binding logic too tightly to one vendor. Examples include:

• Version controlled transformation logic in code

• Clear interfaces between ingestion, processing, and serving layers

• Metadata and configuration stored in portable formats

Best Practices for Building Scalable Data Pipelines

Once you understand the components and architecture decisions, the next step is practice. The following approaches help your teams design and operate scalable healthcare data pipelines that stand up to real world use.

Start with concrete use cases and SLAs

Align pipeline design with clear outcomes. Examples include:

• Near real time ADT feeds for care coordination and alerts

• Daily integrated clinical and claims data for population health analytics

• Regular extracts for payers, registries, or government programs

For each use case, define quality and timeliness expectations. Healthcare data pipeline scalability is easier to design when you know the service level you need to hit.

Design for schema change and source churn

Clinical and operational systems change. New fields appear. Old codes retire. Vendors upgrade platforms. To support this reality:

• Use schema evolution features where available

• Add validation rules that identify breaking changes quickly

• Separate source specific logic from core domain models

Health system data pipelines that expect change from the start avoid outages when upgrades happen.

Automate testing and deployment

Treat healthcare ETL pipelines as software, not one time projects. Introduce:

• Unit tests for transformation logic

• Integration tests for end to end flows

• Automated deployment pipelines with approvals where required

This improves reliability and shortens the time from idea to production while protecting patient safety and compliance.

Invest in metadata and lineage

Metadata tells you what data you have, where it came from, and how it changed. Strong lineage helps you:

• Trace issues back to sources and logic

• Support audits and regulatory questions

• Increase trust in analytics and dashboards

Scalable healthcare data pipelines integrate metadata capture into every step instead of bolting it on later.

Align teams around shared standards

Technical excellence does not help if teams work in silos. For healthcare data engineering efforts, create:

• Shared coding and modeling standards

• Reusable components for common transformations

• Regular design reviews across data, clinical, and compliance stakeholders

This keeps growth aligned and avoids an environment of many similar but incompatible pipelines.

Monitor what matters to the business

Do not limit monitoring to technical metrics. For each critical health system data pipeline, track indicators that leaders care about, such as:

• Data freshness against promised schedules

• Completeness of key fields

• Success of outbound feeds to partners

Tie alerts to these metrics so you respond to issues before they reach clinicians, patients, or regulators.

Common Challenges and How to Address Them

As health systems modernize data infrastructure, similar challenges appear. Planning for them in your healthcare data pipeline architecture helps you avoid expensive detours.

Fragmented legacy integrations

Many organizations rely on a mix of custom scripts, point to point interfaces, and manual file transfers. These are fragile and hard to scale. To address this:

• Inventory existing integrations and classify them by risk and importance

• Move critical flows to a managed integration and pipeline platform

• Retire or consolidate redundant connections

Stepwise migration reduces disruption while raising overall reliability.

Limited in house data engineering capacity

Skilled healthcare data engineering talent is scarce. Teams often juggle support tickets, new demands, and strategic projects at the same time. Consider:

• Standardizing on a smaller set of tools and patterns

• Automating repetitive work like data quality checks and common transforms

• Partnering with experts who specialize in scalable healthcare data pipelines and integration

Data quality and trust issues

If clinicians and leaders do not trust data, adoption stalls. Causes include inconsistent identifiers, missing values, and conflicting definitions across systems. To strengthen trust:

• Introduce data quality checks at ingestion and transformation stages

• Agree on shared definitions for metrics and entities

• Publish data dictionaries and lineage views for key datasets

Security and privacy concerns

Security teams often slow or block data initiatives because they see gaps in controls. Engage them early. For scalable data pipelines healthcare programs should:

• Align on encryption, access control, and logging standards

• Classify datasets by sensitivity level and apply controls accordingly

• Regularly review audit logs and access patterns

When security trusts the pipeline design, approvals move faster and risk falls.

Vendor lock in and rigid platforms

Some integration or analytics platforms lock business logic into proprietary formats. This makes change painful. To reduce exposure:

• Keep transformation logic in reusable, version controlled code

• Use open formats for data at rest

• Design healthcare ETL pipelines so switching tools at one layer does not force a full rebuild

Conclusion

Scalable healthcare data pipelines give your health system a durable foundation for clinical, operational, and strategic decisions. When data moves in consistent, governed, and extensible ways, teams respond faster to new requirements, integrate new partners with less friction, and reduce the risk of failures that affect patient care.

Health system data pipelines succeed when they blend strong architecture with practical execution. You need ingestion patterns that respect the reality of HL7 and FHIR, transformation logic that codifies your clinical and business rules, monitoring tied to outcomes, and security woven into every layer. With this in place, healthcare data pipeline scalability becomes a property of your environment, not a constant struggle.

Vorro helps health systems design and operate scalable healthcare data pipelines that span legacy systems and modern platforms. From integration patterns to healthcare ETL pipelines and managed orchestration, Vorro focuses on outcomes, reliability, and long term flexibility so your teams can focus on care and strategy instead of plumbing.

FAQs

What is a scalable healthcare data pipeline

A scalable healthcare data pipeline is a structured set of processes and tools that move, validate, transform, and deliver clinical and operational data in a way that supports growth in volume, sources, and use cases without constant redesign. It supports both current and future needs while maintaining performance and reliability.

How is a healthcare ETL pipeline different from general ETL

A healthcare ETL pipeline follows the same extract, transform, and load pattern as other ETL systems but works with clinical, financial, and operational data subject to strict privacy, regulatory, and quality requirements. It needs to support standards such as HL7 and FHIR, clinical code systems, and detailed audit trails.

Why do health systems need both batch and streaming data pipelines

Batch pipelines work well for large, periodic workloads such as nightly refreshes for analytics and reporting. Streaming pipelines support use cases that need near real time information, such as ADT alerts or monitoring programs. Health systems often need both approaches within the same scalable healthcare data pipelines strategy.

How do we improve healthcare data pipeline scalability in a legacy environment

Improvement starts with a clear inventory of existing integrations and priority use cases. From there, move critical flows onto a managed, observable pipeline platform, standardize patterns for ingestion and transformation, and phase out fragile point to point scripts. Partnering with specialists in healthcare data engineering can accelerate this shift.

What should we monitor in health system data pipelines

You should monitor technical indicators such as throughput, errors, and latency, along with business focused metrics such as data freshness for key datasets, completeness of critical fields, and success rates for external feeds. These views help you detect and fix issues before they affect clinicians, patients, or external partners.

If you want scalable healthcare data pipelines that support your health system strategy, reduce integration friction, and increase data trust, explore how Vorro partners with organizations like yours to design and run healthcare data engineering programs that deliver results.